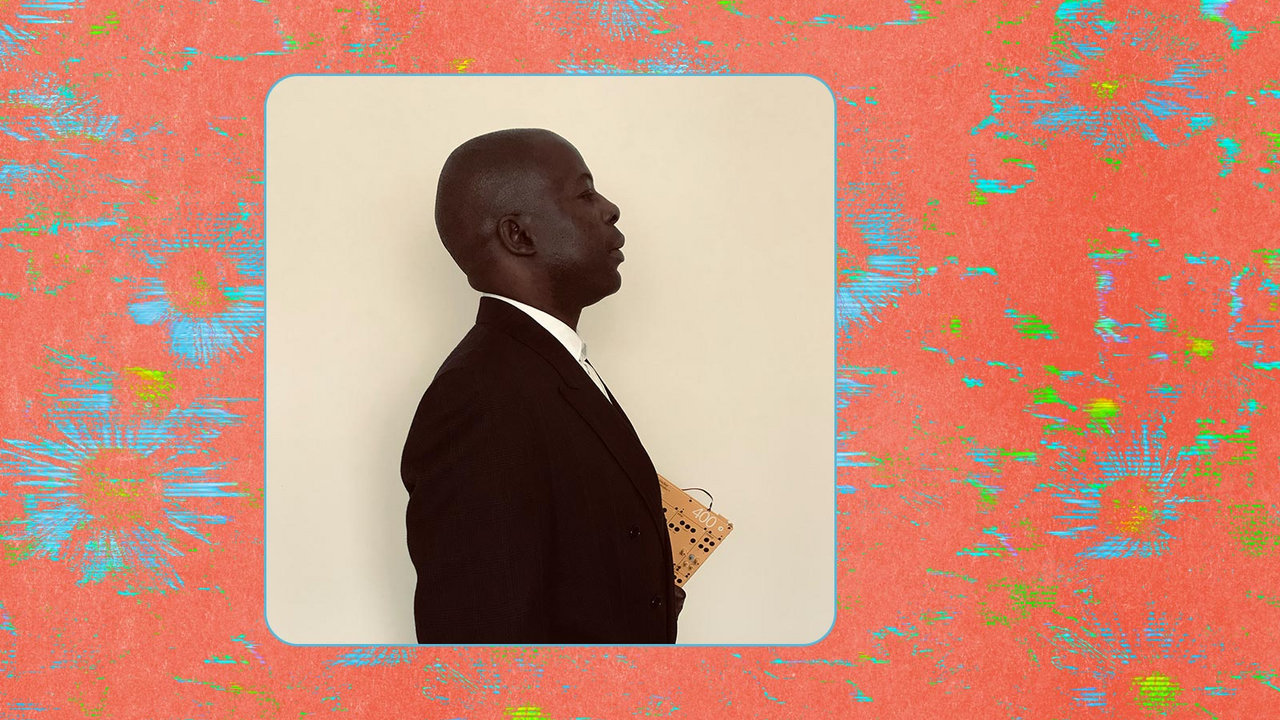

By the time you read this article, the technology it describes will be out of date, left in the dust by the warp-speed acceleration in machine intelligence we’re living through. Understandably, not all musicians are stringing up the bunting for the arrival of AI-assisted music production. Seems too much like cheating, maybe—or worse: a portent of their own obsolescence. Not so for South London producer patten. A frequent flyer in conceptual electronic airspace, patten’s CV includes live AV shows; club DJ sets; numerous philosophizing albums and EPs on Warp Records and his own 555-5555 label; and a multiplatform art project imagining the state of the world in the year 3049.

His latest album is his most original project yet, if only because it couldn’t even have existed four months ago. Last December he stumbled across Riffusion, an audio version of the text-to-image AI tool Stable Diffusion, which, unlike the better-known DALL-E and Midjourney, is open source and free to use. He’d already been using AI as part of his visual art practice under his real name, Damien Roach, employing machine learning to paint a series of canvases in the style of Dutch masters. So when Riffusion popped up on his timeline, he clicked through immediately, and spent the next day and a half inputting text prompts and obsessing over the bizarre units of sound it was spitting out. “I was pretty shocked at what I was managing to get it to do,” he says, remembering that it felt like discovering an “infinite sample library.”

The result, finished within two months, is a truly uncanny artefact of the now. Built entirely from Riffusion’s output, which he made into loops and then stitched into song structures, Mirage FM is familiar and unintelligible at once—a jumble of deep house, boom-bap, moody R&B, and perky pop beamed in from another consciousness, shimmering with dream logic.

The album’s miscellany includes a blast of anthemic stadium pop (“Like Rain”), psychedelic hip-hop on a Madlib tip (“Don’t Worry”), an astonishing attempt at old-school grime (“Walk With U”) that can’t decide if it’s from Bow or the Bronx, and a drowsy house groove (“Drivetime”) good enough for Galcher Lustwerk. With their glitchy, undone feel, the songs are close relatives of patten’s club edits (like his ingenious 2-step twist on Sade’s “Cherish The Day”) but he was careful to leave Riffusion’s weirdness intact: “I intentionally constructed songs that speak to this idea of their own fragmentary nature.” His prompts clearly spanned a wide range of genres, focusing on dance and pop sounds—though he won’t reveal specifics, preferring to think of them as “spells, or incantations.”

Riffusion works by generating sonograms (two-dimensional pictures that contain detailed information about frequency, amplitude, and time) in response to a text prompt, drawing on what it has learnt from a dataset of such images, and then converting those pictures into audio. It’s not massively high-tech, as AI goes, but the results are compelling. “I was just intrigued about what it knew about,” says Roach excitedly as we chat over Diet Cokes in a noisy London bar. “What’s in the dataset? What does it understand?”
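
For readers curious what such a picture looks like in practice, here is a minimal Python sketch of turning a sound into a sonogram. It is an illustration only, not Riffusion's own code: the function name `spectrogram`, the frame and hop sizes, and the 1 kHz test tone are all assumptions chosen for the example.

```python
import numpy as np

def spectrogram(signal, frame_size=256, hop=128):
    """Magnitude sonogram: rows are frequency bins, columns are time frames."""
    window = np.hanning(frame_size)
    # Slice the signal into overlapping, windowed frames
    frames = [
        signal[start:start + frame_size] * window
        for start in range(0, len(signal) - frame_size + 1, hop)
    ]
    # rfft keeps only the non-negative frequencies of a real-valued signal
    return np.abs(np.fft.rfft(np.array(frames), axis=1)).T

sample_rate = 8000                              # samples per second (assumed)
t = np.arange(sample_rate) / sample_rate        # one second of time stamps
tone = np.sin(2 * np.pi * 1000 * t)             # a pure 1 kHz test tone

image = spectrogram(tone)
loudest_bin = int(image.mean(axis=1).argmax())  # row with the most energy
loudest_hz = loudest_bin * sample_rate / 256    # convert bin index to Hz
```

Each column of `image` is a slice of time and each row a band of frequencies, so a steady tone shows up as a single bright horizontal line. A system like Riffusion runs the idea in the other direction, producing the picture first and recovering the sound from it.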

Sometimes it’s clear that Riffusion doesn’t understand much at all. The vocal timbre on “Alright,” a choppy piano ballad, sounds human enough, but the words are an indecipherable babble. It’s like staring at one of DALL-E’s creations and finding a molten fleshblob where human fingers should be. Riffusion’s audio quality is rough too, but Roach compares it to a cool lo-fi filter, or even a game of exquisite corpse, where the output is beyond your control. “There’s an opportunity afforded by a lack of resolution,” he says, comparing Mirage FM to the scalpel-cut collages he used to make from 1960s magazines, exploiting the painterly feel of the vintage print.

The biggest excitement is simply exploring inside the model, a task he likes to call “crate digging in latent space.” Imagine all of recorded music is floating around in three dimensions, with related sounds and styles clustered together: Kylie is in one spot, the Bee Gees are nearby, Goldie is further away; in another direction, jazz in all its forms, and then abstract sounds, like water dripping into a bucket. In the world of machine learning this is called “latent space,” an abstract, multi-dimensional representation of data, which maps what a neural network has learnt from its training.

“It’s like this cube: What is there?” he says, marking out the space with his fingers. “Maybe nobody’s been there before. How do you get there?” A text prompt can find previously unknown midpoints between discrete data in the space—that’s the crate digging. It’s a radical thought, says Roach, because suddenly there’s the possibility of generating ideas that couldn’t exist before, ideas that challenge the status quo: “For me that’s one of the compelling things about the potential uses of these sorts of AI systems—the construction of, essentially, anti-hegemonic forms.”
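
The idea of a midpoint between two known sounds can be sketched in a few lines of Python. This is a toy under stated assumptions: the two vectors are made-up stand-ins for points in a model's latent space, and `slerp` is a generic spherical interpolation, not a claim about how patten works with the model.

```python
import numpy as np

def slerp(a, b, t):
    """Spherical interpolation between vectors a and b, for 0 <= t <= 1."""
    a_unit = a / np.linalg.norm(a)
    b_unit = b / np.linalg.norm(b)
    # Angle between the two directions
    omega = np.arccos(np.clip(np.dot(a_unit, b_unit), -1.0, 1.0))
    if np.isclose(omega, 0.0):
        # Nearly parallel vectors: a straight-line blend is good enough
        return (1 - t) * a + t * b
    return (np.sin((1 - t) * omega) * a + np.sin(t * omega) * b) / np.sin(omega)

# Hypothetical two-dimensional "latent" points, labeled for illustration only
deep_house = np.array([1.0, 0.0])
grime = np.array([0.0, 1.0])

midpoint = slerp(deep_house, grime, 0.5)  # a spot halfway between the two
```

Sliding `t` from 0 to 1 traces a path from one point to the other, and every stop along the way is a location that neither starting point occupies. Real latent spaces have many more dimensions, but the principle of travelling between known coordinates is the same.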

The world of AI-powered music is moving fast. While Roach was sweating over Riffusion, Google unveiled MusicLM, a high-fidelity system capable of spitting out entire songs and inventing new genres (behold “accordion death metal”). “Every other week things are being announced that were literally impossible before,” says Roach, so now is the time for artists and creative thinkers (and, particularly, “people with different ethics” to the typical Silicon Valley engineer) to join the conversation about what AI should be, before it’s too late.

Roach’s job as an artist, he offers, is to “perform little operations” in order to “interrogate the way things are, and hopefully to open potential avenues for other ways of doing, being, thinking, seeing.” Things are made to “seem as solid and real as the sky is blue,” he says, “when in fact they’re very much constructed.” Our budding relationship with AI turns the interrogation back on ourselves. “We’re being forced to reckon with very fundamental questions about what we are. You know, how do our minds work? What is creativity? How are ideas made?” Riffing on the network philosophy of cybernetics, he wonders if it isn’t humans who are the real machines in this system, compared to the creative chaos of AI. “I think the way that we work is predictable—people, patterns of behavior, modes of thinking. These systems can actually help us to break out of our linearity.”