Ever since the first artificial neural network was built in 1951 by Marvin Minsky and Dean Edmonds, artificial intelligence has gradually muscled its way into a wide range of areas: video games, search engines, healthcare, smartphones, and transport. Now, it’s coming for music. AI programs have already learnt how to imitate Bach and the Beatles, and at the end of last year, researchers even trained a neural network to produce “original” metal in the vein of Krallice, Meshuggah, and the Dillinger Escape Plan. Yet while the doom-mongers are predicting that even human creativity will eventually be made obsolete by robots, a growing wave of artists are using AI and algorithms to take their own music in new and exciting directions. Some are using machine learning to teach software to compose music they later play themselves, while others are using live coding to program electronic music that’s improvisational, unpredictable, and surprisingly human. Either way, the 10 artists in this list are not only harnessing high technology to make unique and progressive music but also showing how the rise of AI doesn’t necessarily mean the death of creativity.

Belisha Beacon

This Is Fine

One of last year’s best examples of live coding, Belisha Beacon’s debut This Is Fine uses the ixi lang programming language to create minimal, looping techno. Its five tracks build gradually, which is the result of Beacon writing one line of code, allowing the pattern it generates to set the spectral mood, and then writing another. This process is wielded to powerful effect on the album’s opener, “Wishful Sinking.” Over 15 deceptively intense minutes, Beacon layers brisk plinky riffs, glassy beats, and insistent rhythms into one dizzying mix, making it as ideal for meditative headphone listening as for cutting loose at an Algorave.

Happy Valley Band

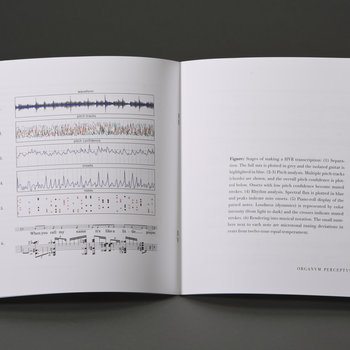

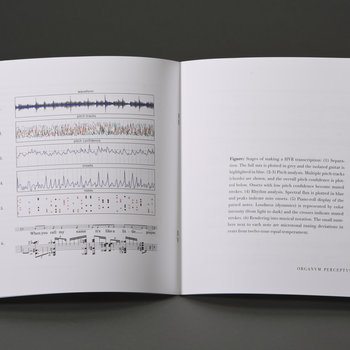

ORGANVM PERCEPTVS

While the Happy Valley Band’s debut record, ORGANVM PERCEPTVS, comprises renditions of such pop classics as “Like a Prayer,” “It’s a Man’s Man’s Man’s World,” and “Born to Run,” they aren’t an ordinary covers band. The 11 tracks on their debut were transcribed by a custom-made machine learning program that was taught to “unmix” its source material and then jigsaw it back together into musical notation. Yet rather than being carbon copies of Madonna or James Brown, what the Happy Valley Band end up performing via rich orchestration is skewed, jittery cacophony. It’s equal parts bewildering and inspiring, highlighting how AI can help humanity see the familiar from a fresh perspective.

Iván Paz

Visions of Space

Iván Paz is another artist who makes extensive use of live coding, yet the Mexican composer’s unsettling Visions of Space from May is also inspired by techniques employed in AI research. The album’s droning yet often harsh electronic soundscapes were put together using musical algorithms whose parameters Paz varies sequentially through time, in much the same way that the parameters controlling an artificial intelligence are altered by the process of learning. Yes, this is all too abstract to express sufficiently in a single paragraph, but the unnerving, sinister power of the dystopian title track alone is enough to prove it’s an effective method.

Daniel M Karlsson

Expanding and overwriting

Perfectly encapsulating the hope/fear that AI could serve as a catalyst for human development, Swedish producer Daniel M Karlsson is a transhumanist and singularitarian whose glitchy IDM is based heavily on algorithmic composition. His LP from last June, Expanding and overwriting, hits the listener with chaotic volleys of beats, keys, and samples. All of them are frantic and fractured, made all the more inhumanly complex by the TidalCycles live coding language that allows Karlsson to cue patterns together simply by typing text.

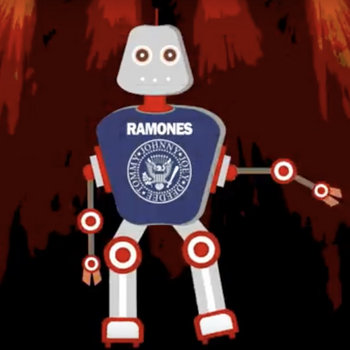

The RAiMONES

I’m Alive!

While the RAiMONES’s I’m Alive! is perhaps the least interesting record on this list from a narrowly musical perspective, it presages a future in which AI will enable musicians and pop stars to live forever (figuratively speaking, of course). The result of a Swiss engineer training an artificial neural network on 130 songs recorded by the actual Ramones, the single and its B-side “Mental Case” are AI-generated imitations of the kind of tracks the seminal punk band might have produced had they not broken up in 1996. Their simple three-chord verses and two-note riffing may hold few musical surprises, yet their infectiousness suggests that our musical idols will increasingly “record” beyond the grave.

sevenism

red blues

Unlike records composed by AI or algorithms, red blues by U.K. producer sevenism uses artificial intelligence to synthesize entirely new instruments. The album was made using NSynth, a Google-made program that uses machine learning and neural networks to fuse samples of instruments into new sounds. This gives the 16-track LP an otherworldly, alien atmosphere, as the program combines the “underlying qualities” of a vibraphone and clarinet, for example, to create swirling, cavernous drones.

Miri Kat

Pursuit – تلاش

A rising fixture in London’s underground live coding scene, Miri Kat released her debut EP Pursuit – تلاش at the beginning of December. Not only was its restless post-industrial beauty live coded using a variety of open-source programs, but it also benefits from Kat’s experience as an engineer of electronic musical instruments. This means that such tracks as “d1574n7” and “fl33” shift and unfurl with the manic energy of much algorithmic music, yet they also have an ethereal quality that rewards closer listening.

Algobabez

Burning Circuits

Of all the acts working in the live coding and algorave scene, Algobabez produce some of the most danceable music. Tongue-in-cheek name aside, the U.K. duo’s Burning Circuits album from last April is a cerebral effort that marries pounding EDM with knotty braindance. Its two 20-minute tracks use live coding to generate convulsive epics, showing that despite the abstract coldness of its method, algorithmic music can be highly visceral.

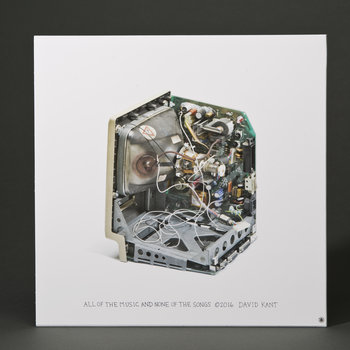

Automatonism

AUTOMATONISM #2

Automatonism is the most recent pseudonym for Swedish producer Johan Eriksson, a PhD student who’s focused on “making music with self-playing machines.” It’s also the name of the modular synthesizer Eriksson has engineered, which he showcases via his two self-titled releases from last year. On AUTOMATONISM #1 and #2, generative algorithms create spiky IDM that bubbles and froths electronically, its scatter-gun beats evoking a world where the products of machines become too much for humans to fully process.

WK569

Omaggio a Marino Zuccheri

WK569 are an Italian trio who in October released Omaggio a Marino Zuccheri, an homage to the sound engineer of the same name who worked at the hallowed RAI Electronic Music Studio in Milan. Focused on the “interaction of man and machine,” the single-track EP sees the threesome feed the self-generating output of structured algorithms into a number of vintage synths and samplers. The result is at times obliquely beautiful and at others almost terrifying, as jagged electronic notes flutter, collect, and scatter unpredictably over the course of 18 gripping minutes.